Why Benchmark Demos Fail on Real Scanned Documents

Why OCR benchmarks often look good on demo files and fall apart on real scanned documents, and what to test instead.


Benchmark demos fail on real scanned documents for one simple reason: the files in the benchmark are often cleaner than the files in production.

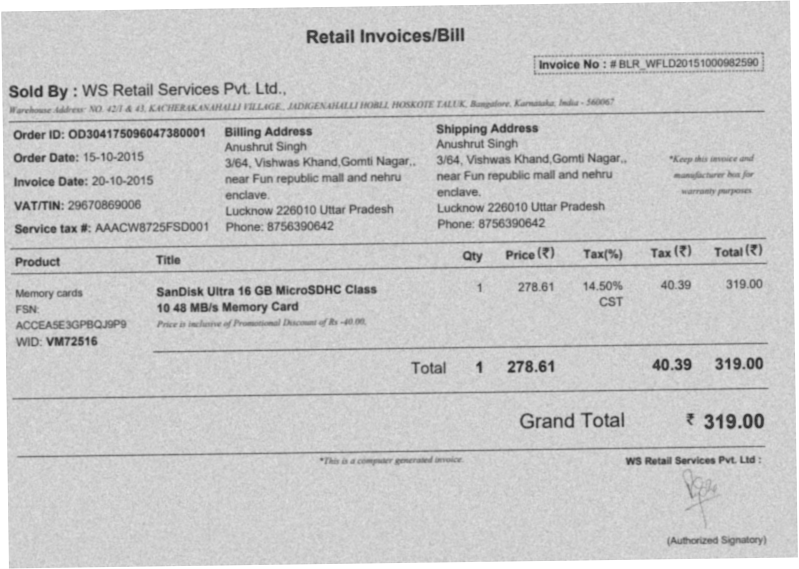

A page like this tells you more about production fit than ten clean demo PDFs ever will.


Cleaner benchmark files mean:

- fewer layout problems

- better image quality

- easier tables

- less downstream cleanup

What Real Files Add

Real scanned documents bring:

- low contrast

- skew and blur

- embedded images inside PDFs

- handwritten fields

- inconsistent layouts

That is where many polished demos stop looking so polished.

Real production queues also add workflow pressure that demo sets rarely model:

- page-level exceptions that need review

- multilingual labels

- mixed document families in one batch

- downstream systems that require a strict JSON contract

So the true question is not “can the model read a clean scan?” It is “what happens when the hard pages show up?”

Why Demo Benchmarks Mislead

Demo benchmarks often hide the exact variables that decide whether an OCR rollout works:

- how image-heavy the PDFs really are

- whether rows and tables stay intact

- how much cleanup still happens after extraction

- whether the output is reviewable when something goes wrong

That is why a pretty benchmark can still produce an ugly rollout.

A Better Test

Test with a mix of file types:

- clean PDFs

- hybrid PDFs

- grayscale scans

- photo-like captures

Then measure:

- row-level extraction quality

- output-contract reliability

- reviewability

- cleanup burden after OCR

If the workflow feeds finance, logistics, underwriting, or another operational system, also measure whether the output contract already fits the downstream schema or still needs another parsing layer.
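One way to make "output-contract reliability" measurable is to check every extracted record against the fields the downstream system expects. The sketch below is a minimal example using only the Python standard library. The contract, the field names, and the helper functions are all hypothetical; swap in whatever your own downstream schema actually requires.

```python
# Hypothetical downstream contract: field name -> required Python type.
# These fields are illustrative only, not a real LeapOCR or ERP schema.
INVOICE_CONTRACT = {
    "invoice_number": str,
    "total": float,
    "currency": str,
    "line_items": list,
}


def contract_violations(record: dict, contract: dict) -> list:
    """Return readable problems; an empty list means the record fits."""
    problems = []
    # Every contract field must be present with the expected type.
    for field, expected in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    # Fields the contract does not know about also count as violations.
    for field in record:
        if field not in contract:
            problems.append(f"unexpected field: {field}")
    return problems


def contract_pass_rate(records: list, contract: dict) -> float:
    """Share of records that fit the contract with no violations."""
    if not records:
        return 0.0
    passing = sum(1 for r in records if not contract_violations(r, contract))
    return passing / len(records)
```

A pass rate well below 1.0 on your hard pages is a sign that another parsing layer is still sitting between OCR and the system that consumes the output. Note that the check is deliberately strict: a total returned as the string "10.50" counts as a violation, because someone downstream would have to convert it.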

What A Useful Evaluation Batch Looks Like

Build a set that includes:

- A clean digital PDF

- A grayscale scan

- A warped phone capture

- A hybrid PDF with embedded image regions

- A page with tables or multiline rows

That mix will tell you far more than a sanitized demo set.
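A small manifest check can keep the batch honest before any OCR runs. The sketch below assumes you tag each file with a category label yourself. The label names and the manifest shape (a list of filename and category pairs) are our own invention for illustration.

```python
from collections import Counter

# Category labels are hypothetical short names for the five page types
# listed above; use whatever naming fits your own test set.
REQUIRED_CATEGORIES = {
    "clean_digital_pdf",
    "grayscale_scan",
    "warped_phone_capture",
    "hybrid_pdf",
    "tables_or_multiline_rows",
}


def missing_categories(manifest: list) -> list:
    """Return required categories with no file in the manifest, sorted."""
    present = {category for _filename, category in manifest}
    return sorted(REQUIRED_CATEGORIES - present)


def category_counts(manifest: list) -> Counter:
    """Count files per category so a lopsided batch is easy to spot."""
    return Counter(category for _filename, category in manifest)
```

If `missing_categories` returns anything, the batch is closer to a demo set than to a production sample.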

Minimum Scorecard For A Real Test

At minimum, score:

- text and field accuracy

- row or table fidelity

- downstream JSON fit

- reviewability for exceptions

- cleanup burden after extraction

That last line is the one most polished demos hide, and it is often the one that matters most in production.
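Three of these scores are easy to compute once you have hand-checked ground truth for each page. The sketch below is one possible scoring approach, not a standard metric. It assumes extracted fields and ground truth are plain dictionaries and that table rows are lists. The function names are hypothetical.

```python
_MISSING = object()  # sentinel so a real None value is not confused with "absent"


def field_accuracy(extracted: dict, truth: dict) -> float:
    """Share of ground-truth fields whose extracted value matches exactly."""
    if not truth:
        return 1.0
    hits = sum(1 for key, value in truth.items()
               if extracted.get(key, _MISSING) == value)
    return hits / len(truth)


def row_fidelity(extracted_rows: list, truth_rows: list) -> float:
    """Share of ground-truth rows reproduced exactly, position by position."""
    if not truth_rows:
        return 1.0
    hits = sum(1 for got, want in zip(extracted_rows, truth_rows) if got == want)
    return hits / len(truth_rows)


def cleanup_burden(extracted: dict, truth: dict) -> int:
    """Number of fields a reviewer would have to fix, add, or delete."""
    keys = set(extracted) | set(truth)
    return sum(1 for key in keys
               if extracted.get(key, _MISSING) != truth.get(key, _MISSING))
```

The row comparison is positional on purpose. A split or merged row shifts everything after it, and the score drops accordingly, which mirrors the cleanup work a person would face. Cleanup burden is reported as a raw count rather than a ratio so it can be summed across a batch and read as "fields someone had to touch."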

Where LeapOCR Fits

LeapOCR is positioned around this exact production-first view of OCR:

- benchmark-backed model families

- markdown when humans still need to inspect the page

- schema-fit JSON when systems need a strict record

- optional instructions and bounding boxes when hard pages need more control

That matters because the winning product is not the one with the prettiest sample. It is the one that removes the most operational cleanup from real files.

Pages That Matter More Than The Demo

- PDF Parser API for Scanned Documents

- Best OCR APIs for Scanned PDFs

- Invoice OCR Benchmark: LeapOCR vs Veryfi vs Mindee vs Nanonets

Final Take

The benchmark that matters is the one that makes your ugliest real files part of the test set.

