Invoice OCR Benchmark: LeapOCR vs Veryfi vs Mindee vs Nanonets

A practical benchmark framework for comparing invoice OCR tools on real files, with emphasis on line items, messy scans, and downstream fit.

Most invoice OCR benchmarks are easier on vendors than real finance workflows are.

They use clean files, small samples, and success criteria that stop at “did text come back?” That is not the standard AP teams actually care about.

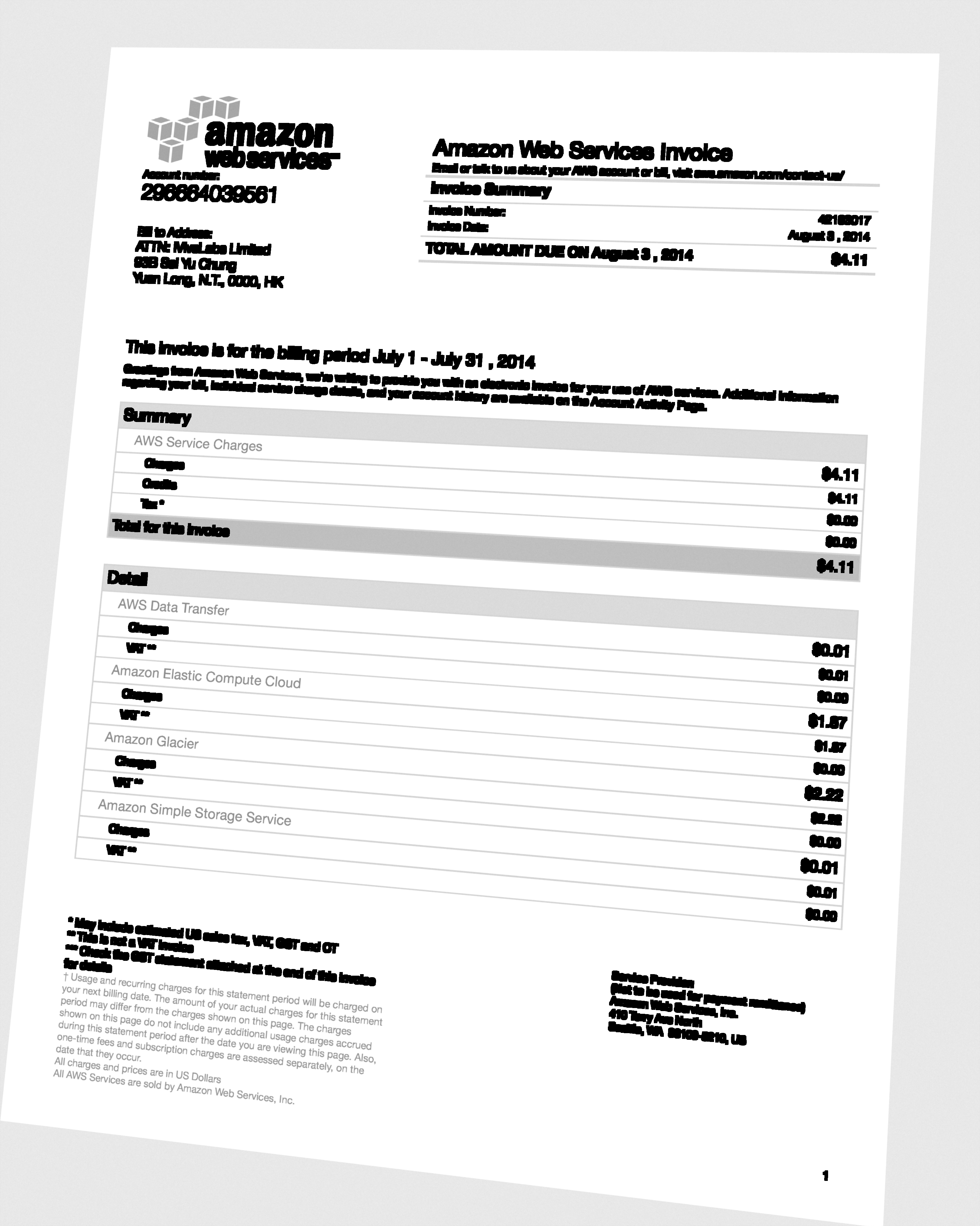

A realistic benchmark should include files like this, not only polished digital invoices.

The better benchmark asks:

- Did header fields survive extraction?

- Did line items remain structured?

- Did the tool handle messy scans and hybrid PDFs?

- How much cleanup remained before the invoice could be posted?

Do Not Publish Fake Scores

The least useful benchmarks declare a winner without showing the test set, the scoring method, or the workflow assumptions.

The honest version is simpler:

- compare on your own document mix

- publish the scorecard

- avoid claiming precision you did not measure
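One concrete way to avoid overstated precision is to publish every accuracy number together with its sample size and an uncertainty range. The sketch below assumes you have already counted, per tool, how many test invoices passed a given check. It uses a Wilson score interval, a standard choice for proportions measured on small samples. The tool names and counts are hypothetical.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion; z=1.96 gives roughly 95% coverage."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    # Clamp to [0, 1] to absorb floating-point noise at the edges.
    return max(0.0, center - half), min(1.0, center + half)


def report(tool: str, correct: int, total: int) -> str:
    """Format a scorecard cell that shows the sample size and the uncertainty."""
    lo, hi = wilson_interval(correct, total)
    return f"{tool}: {correct}/{total} = {correct / total:.1%} (95% CI {lo:.1%} to {hi:.1%})"


# Hypothetical counts: same pass rate range, very different sample sizes.
print(report("tool_a", 46, 50))
print(report("tool_b", 9, 10))
```

With only ten files, the interval is wide enough that a two-point gap between tools says very little, which is exactly the kind of precision a published scorecard should not claim.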

This post is a framework for that kind of evaluation.

The Four Tools Worth Comparing

These four tools overlap on invoice OCR, but they sit at different points in the product stack.

In broad terms:

- LeapOCR is strongest when realistic, messy documents and downstream fit matter most.

- Veryfi is strongest when the workflow is tightly finance- and invoice-centered.

- Mindee is strong when teams want broader developer packaging and a polished API motion.

- Nanonets is strong when buyers want more workflow SaaS around OCR.

What To Benchmark

Use at least four document types:

- clean digital invoices

- hybrid PDFs with embedded-image regions

- older grayscale scans

- camera-like captures or low-quality uploads
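Averaging one score across the whole test set lets clean digital invoices hide failures on scans. A minimal sketch, assuming each tool's result is stored as one record per file with a document-type label and a 0-1 score, keeps the four buckets separate, flags buckets a tool was never tested on, and reports each tool's weakest bucket. The bucket identifiers, tool names, and scores are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# The four document buckets from the benchmark; the identifiers are hypothetical.
DOC_TYPES = ("clean_digital", "hybrid_pdf", "grayscale_scan", "camera_capture")

# Hypothetical per-document results: one record per (tool, file), score in 0-1.
results = [
    {"tool": "tool_a", "doc_type": "clean_digital", "score": 0.98},
    {"tool": "tool_a", "doc_type": "grayscale_scan", "score": 0.61},
    {"tool": "tool_a", "doc_type": "grayscale_scan", "score": 0.55},
    {"tool": "tool_b", "doc_type": "clean_digital", "score": 0.93},
    {"tool": "tool_b", "doc_type": "grayscale_scan", "score": 0.86},
]


def missing_types(records: list[dict], tool: str) -> list[str]:
    """Document types with no results for this tool, i.e. gaps in the test set."""
    seen = {r["doc_type"] for r in records if r["tool"] == tool}
    return [dt for dt in DOC_TYPES if dt not in seen]


def breakdown(records: list[dict]) -> dict[tuple[str, str], float]:
    """Mean score per (tool, doc_type) so clean files cannot mask scan failures."""
    buckets = defaultdict(list)
    for r in records:
        buckets[(r["tool"], r["doc_type"])].append(r["score"])
    return {key: mean(vals) for key, vals in buckets.items()}


def worst_bucket(table: dict[tuple[str, str], float], tool: str) -> tuple[str, float]:
    """The document type where this tool scores lowest."""
    scores = {dt: s for (t, dt), s in table.items() if t == tool}
    return min(scores.items(), key=lambda kv: kv[1])


table = breakdown(results)
print(missing_types(results, "tool_a"))
print(worst_bucket(table, "tool_a"))
```

Reporting the worst bucket next to the average makes it obvious when a tool looks strong overall but collapses on grayscale scans.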

Then score each tool on:

- header field accuracy

- line-item preservation

- tax and total reliability

- downstream JSON fit

- reviewability for exceptions
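Header field accuracy is easy to over- or under-count if formatting differences are treated as errors. The sketch below assumes you have hand-labeled ground truth per invoice and each tool's output as flat dictionaries. The field names are hypothetical, and the normalization rules (case-insensitive text, `.` as the decimal separator, ISO 8601 dates on both sides) are assumptions you should adapt to your own supplier mix.

```python
import re
from decimal import Decimal, InvalidOperation

# Hypothetical header fields; use whatever your AP system actually routes on.
HEADER_FIELDS = ("invoice_number", "invoice_date", "vendor_name", "total")


def normalize(field: str, value):
    """Normalize values so formatting differences are not counted as errors."""
    if value is None:
        return None
    text = str(value).strip()
    if field == "total":
        # Assumes '.' as the decimal separator; strips currency symbols and thousands commas.
        try:
            return Decimal(re.sub(r"[^\d.\-]", "", text))
        except InvalidOperation:
            return None
    if field == "invoice_date":
        return text  # assumes both sides already use ISO 8601 dates
    # Text fields: collapse whitespace and ignore case.
    return " ".join(text.split()).casefold()


def header_accuracy(predicted: dict, truth: dict) -> float:
    """Share of ground-truth header fields the tool reproduced."""
    fields = [f for f in HEADER_FIELDS if truth.get(f) is not None]
    hits = sum(normalize(f, predicted.get(f)) == normalize(f, truth[f]) for f in fields)
    return hits / len(fields) if fields else 1.0


truth = {"invoice_number": "inv-0042", "invoice_date": "2024-03-01",
         "vendor_name": "ACME Supplies", "total": "1234.5"}
predicted = {"invoice_number": "INV-0042", "invoice_date": "2024-03-01",
             "vendor_name": "Acme  Supplies", "total": "$1,234.50"}
print(header_accuracy(predicted, truth))
```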

If line items matter for your workflow, weight them heavily. A tool that gets totals right but breaks the row array can still create a large AP cleanup burden.
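A minimal sketch of a line-item fidelity check, assuming both the tool output and the ground truth are lists of row dictionaries with `description`, `quantity`, and `amount` keys (hypothetical names) and numeric quantities and amounts. It matches rows one-to-one on normalized values and reports precision and recall, so a merged or split row shows up even when the totals still agree. Exact matching is a deliberately strict choice; fuzzy description matching is a reasonable refinement.

```python
from decimal import Decimal


def row_key(row: dict) -> tuple:
    """Normalized comparison key; assumes quantity and amount are present and numeric."""
    description = " ".join(str(row.get("description", "")).split()).casefold()
    return description, Decimal(str(row["quantity"])), Decimal(str(row["amount"]))


def line_item_fidelity(predicted_rows: list[dict], truth_rows: list[dict]) -> dict:
    """Greedy one-to-one exact matching of predicted rows against ground-truth rows."""
    remaining = [row_key(r) for r in predicted_rows]
    matched = 0
    for row in truth_rows:
        key = row_key(row)
        if key in remaining:
            remaining.remove(key)  # each predicted row can match at most once
            matched += 1
    precision = matched / len(predicted_rows) if predicted_rows else float(not truth_rows)
    recall = matched / len(truth_rows) if truth_rows else float(not predicted_rows)
    return {"matched": matched, "precision": precision, "recall": recall}


truth_rows = [
    {"description": "Steel bolts M8", "quantity": 100, "amount": "45.00"},
    {"description": "Washers", "quantity": 100, "amount": "12.00"},
    {"description": "Freight", "quantity": 1, "amount": "30.00"},
]
# A common failure: two rows merged into one.
predicted_rows = [
    {"description": "Steel bolts M8 Washers", "quantity": 200, "amount": "57.00"},
    {"description": "Freight", "quantity": "1", "amount": "30"},
]
print(line_item_fidelity(predicted_rows, truth_rows))
```

In this made-up example the predicted amounts still sum to 87.00, the same as the ground truth, so a totals-only check would pass while line-item recall is only one in three.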

Why This Benchmark Is More Useful

This framework matters because most teams do not switch vendors over clean sample files.

They switch when:

- line items break

- scans stop working

- JSON no longer fits the downstream workflow

- review queues get slower instead of faster

That is also why workflow-specific capabilities, such as an Invoice to JSON API or an Invoice Line Item Extraction API, matter more than generic OCR alone.
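"JSON no longer fits" can be measured rather than argued about. The sketch below assumes you define the contract your downstream system expects and count how many ways each tool's JSON output misses it. `CONTRACT` and `LINE_ITEM_CONTRACT` are hypothetical; teams that already maintain a JSON Schema could validate against that instead. This only scores output you have already collected and does not call any vendor API.

```python
import json

# Hypothetical downstream contract: the fields and types your AP system expects.
CONTRACT = {
    "invoice_number": str,
    "invoice_date": str,
    "vendor_name": str,
    "currency": str,
    "total": (int, float),
    "line_items": list,
}
LINE_ITEM_CONTRACT = {"description": str, "quantity": (int, float), "amount": (int, float)}


def contract_violations(payload: str) -> list[str]:
    """List every way a JSON payload misses the contract; an empty list means it fits."""
    data = json.loads(payload)
    problems = []
    for key, expected in CONTRACT.items():
        if key not in data:
            problems.append(f"missing {key}")
        elif not isinstance(data[key], expected):
            problems.append(f"{key}: wrong type {type(data[key]).__name__}")
    items = data.get("line_items")
    if isinstance(items, list):
        for i, row in enumerate(items):
            if not isinstance(row, dict):
                problems.append(f"line_items[{i}]: not an object")
                continue
            for key, expected in LINE_ITEM_CONTRACT.items():
                if not isinstance(row.get(key), expected):
                    problems.append(f"line_items[{i}].{key}: missing or wrong type")
    return problems


good = json.dumps({
    "invoice_number": "INV-1", "invoice_date": "2024-03-01", "vendor_name": "Acme",
    "currency": "EUR", "total": 87.0,
    "line_items": [{"description": "Freight", "quantity": 1, "amount": 30.0}],
})
print(contract_violations(good))
```

Averaging the violation count per invoice gives a simple, comparable "JSON fit" number for each tool.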

A Suggested Scorecard

Use a simple scoring sheet with categories like:

| Category | Why it matters |
|---|---|
| Header accuracy | Determines whether the invoice can be identified and routed |
| Line-item fidelity | Determines whether AP can trust the detail rows |
| Totals and tax consistency | Catches the most dangerous posting mistakes |
| Reviewability | Determines whether exceptions are fast or painful to resolve |
| JSON fit | Measures how little transformation remains before writeback |
| Cleanup burden | Captures the real operational cost after extraction |

You can keep the numbers simple. The important part is using the same rubric across all tools.
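A minimal sketch of applying one fixed rubric to every tool, assuming each category has already been reduced to a 0-1 score where higher is better (so cleanup burden would be entered as, for example, one minus the share of fields corrected). The weights are hypothetical; line-item fidelity is weighted most heavily to reflect line-item-heavy workflows. Refusing to score an incomplete sheet keeps every tool on the same rubric.

```python
# Hypothetical weights; adjust to your workflow, but keep them identical for every tool.
WEIGHTS = {
    "header_accuracy": 0.15,
    "line_item_fidelity": 0.30,
    "totals_and_tax": 0.20,
    "reviewability": 0.10,
    "json_fit": 0.10,
    "cleanup_burden": 0.15,  # entered as 1 - normalized burden, so higher is better
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine 0-1 category scores with one fixed rubric; incomplete sheets are rejected."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS) / sum(WEIGHTS.values())


# Hypothetical category scores for one tool.
tool_a = {
    "header_accuracy": 0.95, "line_item_fidelity": 0.70, "totals_and_tax": 0.90,
    "reviewability": 0.80, "json_fit": 0.85, "cleanup_burden": 0.60,
}
print(round(weighted_score(tool_a), 3))
```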

Where LeapOCR Pulls Ahead

LeapOCR becomes especially compelling when the benchmark includes:

- ugly scans and hybrid PDFs

- line-item-heavy invoices

- workflows that need markdown for review and JSON for systems

- cases where instructions or bounding boxes reduce exception-handling time

Practical evaluation factors:

- official SDKs for JavaScript, Python, Go, and PHP help keep integration cost low

- credit-based pricing with a 100-credit trial makes it easy to run a real pilot before committing

- reusable templates let teams standardize invoice extraction once and reuse the configuration across suppliers

That combination matters because the real operational cost often sits in the review queue, not just the extraction call.
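Review-queue cost can be measured directly if you keep both the raw extraction and the version a reviewer approved for posting. A minimal sketch, assuming both are stored as flat dictionaries with the same (hypothetical) keys, counts how many fields were changed per invoice and how often an invoice needed any correction at all.

```python
def corrections(extracted: dict, approved: dict) -> int:
    """Fields a reviewer changed before posting. A changed line_items list counts once here."""
    keys = set(extracted) | set(approved)
    return sum(extracted.get(k) != approved.get(k) for k in keys)


def cleanup_summary(pairs: list[tuple[dict, dict]]) -> dict:
    """Aggregate review effort over (extracted, approved) pairs for one tool."""
    if not pairs:
        raise ValueError("need at least one reviewed invoice")
    counts = [corrections(extracted, approved) for extracted, approved in pairs]
    return {
        "invoices": len(counts),
        "touched_rate": sum(c > 0 for c in counts) / len(counts),
        "corrections_per_invoice": sum(counts) / len(counts),
    }


# Hypothetical (extracted, approved) pairs for one tool.
pairs = [
    ({"invoice_number": "INV-1", "total": 87.0}, {"invoice_number": "INV-1", "total": 87.0}),
    ({"invoice_number": "INV-2", "total": 78.0}, {"invoice_number": "INV-2", "total": 87.0}),
    ({"invoice_number": "1NV-3", "total": None}, {"invoice_number": "INV-3", "total": 42.0}),
]
print(cleanup_summary(pairs))
```

A finer version would count corrected rows inside `line_items` individually, but even this coarse count separates tools that post cleanly from tools that keep reviewers busy.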

The Practical Test

If you run this benchmark honestly, the strongest tool is usually the one that leaves the smallest cleanup burden after extraction.

That means:

- fewer row-level repairs

- less manual validation

- better output contracts

- easier exception handling
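Part of "less manual validation" can come from cheap arithmetic checks that send only inconsistent invoices to a person. A minimal sketch, assuming tax-exclusive line amounts, a single tax amount, and no separate discount or shipping fields (all simplifications; the field names are hypothetical):

```python
from decimal import Decimal


def totals_issues(invoice: dict, tolerance: Decimal = Decimal("0.01")) -> list[str]:
    """Arithmetic consistency checks; an empty list means the invoice can skip manual review."""
    lines = sum((Decimal(str(r["amount"])) for r in invoice["line_items"]), Decimal("0"))
    subtotal = Decimal(str(invoice["subtotal"]))
    tax = Decimal(str(invoice["tax"]))
    total = Decimal(str(invoice["total"]))
    issues = []
    if abs(lines - subtotal) > tolerance:
        issues.append(f"line items sum to {lines}, subtotal is {subtotal}")
    if abs(subtotal + tax - total) > tolerance:
        issues.append(f"subtotal + tax = {subtotal + tax}, total is {total}")
    return issues


invoice = {
    "line_items": [{"amount": "45.00"}, {"amount": "12.00"}, {"amount": "30.00"}],
    "subtotal": "87.00",
    "tax": "16.53",
    "total": "103.53",
}
print(totals_issues(invoice))
```

Counting how often each tool's output trips these checks on the same files is a direct proxy for how much validation work it leaves behind.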

If a tool wins only on a clean sample set and loses once the workload includes real supplier variation, it is not the strongest production choice.

What To Do Next

If you are running your own evaluation, pair this post with:

- Invoice OCR API

- Invoice to JSON API

- Invoice Line Item Extraction API

- Best Invoice OCR APIs for Developers

Final Take

The best invoice OCR benchmark is the one that measures how much work remains after the OCR call.

That is where real buying decisions usually get made.

Try LeapOCR on your own documents

Start with 100 free credits and see how your workflow holds up on real files.

Eligible paid plans include a 3-day trial with 100 credits after you add a credit card, so you can test actual PDFs, scans, and forms before committing to a rollout.