How to Extract Bank Statement Data to JSON

A practical guide to converting bank statements into JSON with balances, metadata, and transaction rows that downstream systems can actually use.

Bank statement extraction becomes useful only when the output is more than readable text.

Most real workflows need:

- account metadata

- statement period

- opening and closing balances

- transaction rows

That means the target format is usually JSON, not only OCR text.

Most statement workflows fail when rows lose structure. Dates, descriptions, amounts, and balances have to stay attached.

Most statement workflows fail when rows lose structure. Dates, descriptions, amounts, and balances have to stay attached.

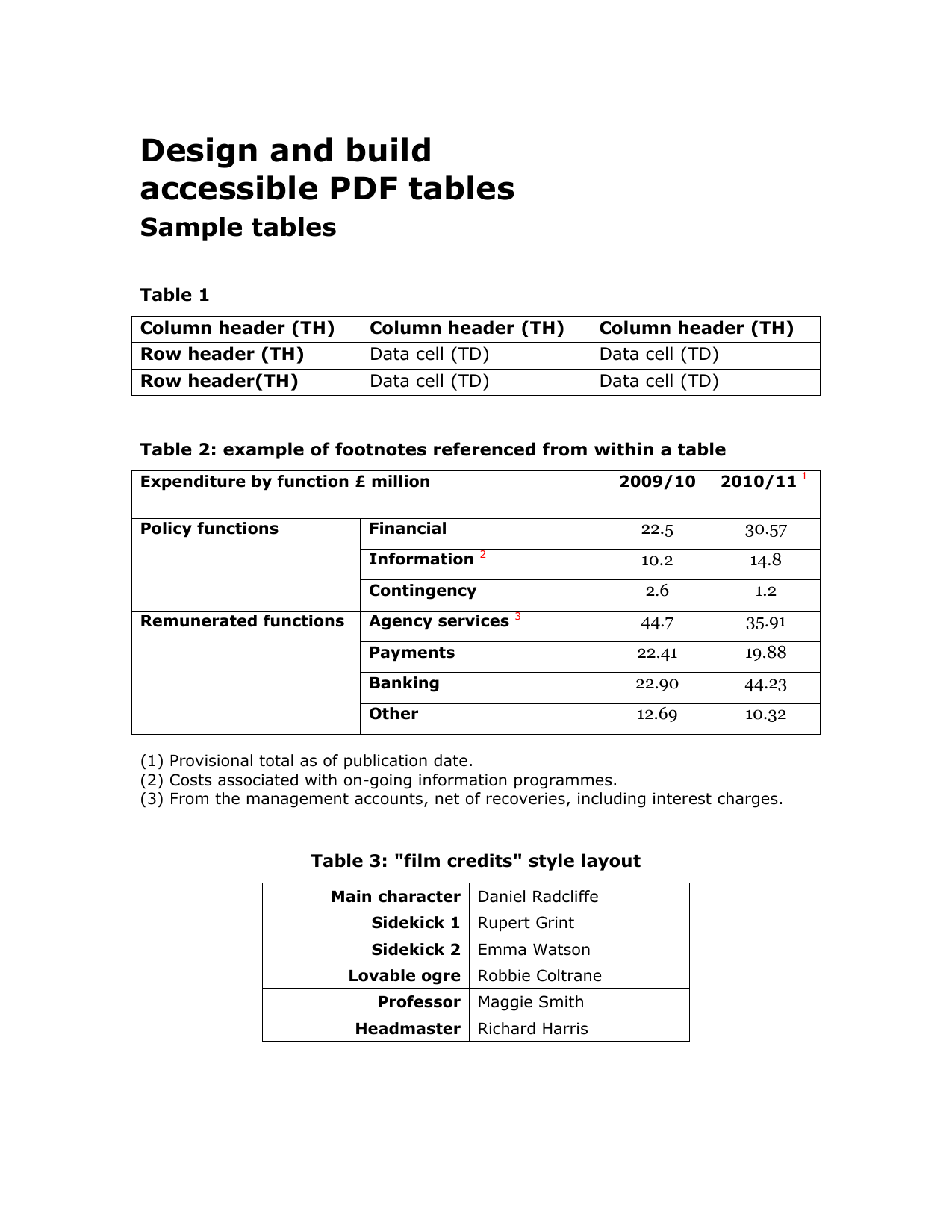

What The JSON Should Include

A useful statement object often looks like this:

```json
{
  "account_holder": "Northwind LLC",
  "statement_period": "2026-02-01 to 2026-02-28",
  "opening_balance": 14520.33,
  "closing_balance": 18104.77,
  "transactions": [
    {
      "posted_at": "2026-02-07",
      "description": "ACH CREDIT - Client Payment",
      "amount": 4800.0,
      "direction": "credit"
    }
  ]
}
```

In real systems, you will usually want more structure than this simplified example. It is often worth splitting the statement period into start_date and end_date, preserving the running balance when available, and deciding up front how debits and credits should be represented.

For example:

- use negative numbers for debits and positive numbers for credits

- keep the original description plus a normalized description

- normalize all dates to one format before storage

- preserve currency explicitly if the workflow spans regions
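Here is a minimal Python sketch of those choices. It assumes the simplified object above as input. The `Statement` and `Transaction` classes, the `to_statement`, `to_decimal` and `normalize_description` helpers, and the USD default are hypothetical names used for illustration. They are not part of any LeapOCR API. Amounts become `Decimal` values so that cents survive arithmetic exactly. Debits are stored as negative numbers.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import Decimal
from typing import Optional


@dataclass
class Transaction:
    posted_at: date
    description_raw: str          # exactly as printed on the statement
    description_normalized: str   # cleaned-up form for matching and search
    amount: Decimal               # signed: credits positive, debits negative
    running_balance: Optional[Decimal] = None


@dataclass
class Statement:
    account_holder: str
    start_date: date
    end_date: date
    currency: str
    opening_balance: Decimal
    closing_balance: Decimal
    transactions: list[Transaction] = field(default_factory=list)


def normalize_description(text: str) -> str:
    # Hypothetical rule: collapse runs of whitespace and upper-case the text.
    return " ".join(text.split()).upper()


def to_decimal(value) -> Decimal:
    # Go through str() so 14520.33 becomes Decimal("14520.33"), not a binary float.
    return Decimal(str(value))


def to_statement(raw: dict, default_currency: str = "USD") -> Statement:
    """Convert the simplified extraction shape into the richer record."""
    start_text, end_text = raw["statement_period"].split(" to ")
    rows = []
    for row in raw["transactions"]:
        amount = abs(to_decimal(row["amount"]))
        if row["direction"] == "debit":
            amount = -amount  # sign carries the direction
        elif row["direction"] != "credit":
            raise ValueError(f"unknown direction: {row['direction']!r}")
        balance = row.get("running_balance")
        rows.append(Transaction(
            posted_at=date.fromisoformat(row["posted_at"]),
            description_raw=row["description"],
            description_normalized=normalize_description(row["description"]),
            amount=amount,
            running_balance=to_decimal(balance) if balance is not None else None,
        ))
    return Statement(
        account_holder=raw["account_holder"],
        start_date=date.fromisoformat(start_text.strip()),
        end_date=date.fromisoformat(end_text.strip()),
        currency=raw.get("currency", default_currency),  # explicit value wins
        opening_balance=to_decimal(raw["opening_balance"]),
        closing_balance=to_decimal(raw["closing_balance"]),
        transactions=rows,
    )
```

Keeping description_raw next to the normalized form means a reviewer can still match a row against the source statement. `date.fromisoformat` also rejects impossible dates such as 2026-02-29, so a bad period fails loudly instead of being stored.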

Common Failure Modes

Bank statement extraction usually breaks when:

- transaction rows flatten into text

- balances are not explicit fields

- scans degrade table accuracy

- the workflow stops at conversion instead of structured extraction

That is why a bank statement OCR API is a better fit than a generic PDF parser when the output needs to feed reconciliation or underwriting.

Start From The Workflow, Not The File

Before you define a schema, decide what the JSON needs to do next.

Examples:

- Reconciliation needs transaction rows and balances.

- Underwriting may need monthly totals, NSF events, and account metadata.

- A lending workflow may need normalized transaction categories.

The right schema is the narrowest one that still supports the decision you are trying to automate.
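For underwriting, the extra fields usually come from aggregating the transaction array, not from the page itself. The sketch below assumes that rows already carry ISO dates and signed amounts, with credits positive and debits negative. Counting NSF events by matching the standalone word "NSF" in the description is a hypothetical heuristic. Banks label these fees in different ways. The function name is illustrative.

```python
import re
from collections import defaultdict
from decimal import Decimal

# Hypothetical heuristic: treat any row whose description contains the
# standalone word "NSF" as a non-sufficient-funds event.
NSF_PATTERN = re.compile(r"\bNSF\b", re.IGNORECASE)


def underwriting_summary(transactions: list[dict]) -> dict[str, dict]:
    """Roll signed transaction rows up into per-month credit/debit totals."""
    months = defaultdict(
        lambda: {"credits": Decimal("0"), "debits": Decimal("0"), "nsf_events": 0}
    )
    for row in transactions:
        month = row["posted_at"][:7]          # "YYYY-MM" from an ISO date
        amount = Decimal(str(row["amount"]))  # signed: debits are negative
        bucket = months[month]
        if amount >= 0:
            bucket["credits"] += amount
        else:
            bucket["debits"] += -amount       # report debits as a positive total
        if NSF_PATTERN.search(row["description"]):
            bucket["nsf_events"] += 1
    return dict(sorted(months.items()))       # months in chronological order
```

The word boundary matters. A plain substring check would count every "TRANSFER" row as an NSF event.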

A Better Workflow

The safer extraction pattern is:

- Capture statement metadata.

- Capture opening and closing balances.

- Extract transactions as an array.

- Keep markdown available for review when needed.

That fourth step is underrated. Many teams try to choose between readable output and structured output too early. In practice, finance workflows often want both:

- JSON for the system

- markdown for a reviewer who needs to inspect the source quickly

LeapOCR supports both paths, and can also add bounding boxes when a review tool needs to highlight the exact row or total that triggered an exception.
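One way to keep both outputs together is to store them side by side. The sketch builds a review payload only when validation fails. The `Extraction` and `Box` shapes, the field-path keys, and the coordinate layout are assumptions for illustration. They are not LeapOCR's actual response format.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Box:
    page: int
    x0: float
    y0: float
    x1: float
    y1: float


@dataclass
class Extraction:
    data: dict                     # schema-fit JSON for downstream systems
    markdown: str                  # readable rendering for a human reviewer
    # Optional geometry keyed by field path, e.g. "closing_balance" or
    # "transactions[3].amount" (hypothetical path convention).
    boxes: dict[str, Box] = field(default_factory=dict)


def review_item(extraction: Extraction, errors: dict[str, str]) -> Optional[dict]:
    """Build a review-queue payload, or return None when nothing failed."""
    if not errors:
        return None
    return {
        "markdown": extraction.markdown,
        "issues": [
            # Attach a box only when the extraction recorded one for this field.
            {"field": path, "message": message, "box": extraction.boxes.get(path)}
            for path, message in sorted(errors.items())
        ],
    }
```

Clean statements flow straight to the system through `data`. Only exceptions carry the markdown and geometry a reviewer needs.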

A Practical Schema Checklist

For most bank statement pipelines, define:

- account holder or account label

- account number or masked identifier

- statement start and end dates

- opening and closing balances

- an array of transactions

Each transaction should usually include:

- posting date

- description

- amount

- debit or credit direction

- running balance when present
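The checklist maps naturally onto a JSON Schema. The sketch writes one as a Python dict with hypothetical field names. running_balance is optional because not every statement prints it. The `missing_fields` helper is illustrative and only checks required keys. A full validator, such as the `jsonschema` package, would also enforce the type and enum constraints.

```python
# Hypothetical field names; adjust to whatever your downstream system expects.
STATEMENT_SCHEMA = {
    "type": "object",
    "required": [
        "account_holder", "account_number_masked", "start_date", "end_date",
        "opening_balance", "closing_balance", "transactions",
    ],
    "properties": {
        "account_holder": {"type": "string"},
        "account_number_masked": {"type": "string"},   # e.g. last four digits only
        "start_date": {"type": "string", "format": "date"},
        "end_date": {"type": "string", "format": "date"},
        "opening_balance": {"type": "number"},
        "closing_balance": {"type": "number"},
        "transactions": {
            "type": "array",
            "items": {
                "type": "object",
                "required": ["posted_at", "description", "amount", "direction"],
                "properties": {
                    "posted_at": {"type": "string", "format": "date"},
                    "description": {"type": "string"},
                    "amount": {"type": "number"},
                    "direction": {"enum": ["debit", "credit"]},
                    # Optional: only some statements print a running balance.
                    "running_balance": {"type": ["number", "null"]},
                },
            },
        },
    },
}


def missing_fields(record: dict, schema: dict = STATEMENT_SCHEMA) -> list[str]:
    """List required keys that are absent, using the schema's "required" arrays."""
    missing = [key for key in schema["required"] if key not in record]
    row_required = schema["properties"]["transactions"]["items"]["required"]
    for i, row in enumerate(record.get("transactions", [])):
        missing += [f"transactions[{i}].{key}" for key in row_required if key not in row]
    return missing
```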

If the statements can arrive in multiple languages, it is also worth deciding whether your stored JSON should preserve the source language or normalize descriptions into one language during extraction.

When A Parser Is Not Enough

Tools like PDF Vector Bank Statement Converter can be useful for top-of-funnel conversion or readable parsing.

But many finance workflows need to go one step further: structured JSON shaped for another system.

Validation Matters More Than Another Parsing Pass

Do not write statement JSON downstream without basic validation.

At minimum, validate:

- required metadata exists

- opening and closing balances parse as numbers

- transaction dates are real dates

- debit and credit direction is consistent with amount sign

- transaction dates fall within the statement period and rows follow a consistent order

This is one reason schema-first extraction is useful: it forces the team to define the target record before raw OCR output leaks into downstream code.
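The validation checks above can be sketched in Python. The sketch assumes a normalized shape with start_date, end_date, and signed amounts, where debits are negative. It also adds one check the list does not mention: the opening balance plus the net of all rows should equal the closing balance. That check only holds if the transaction array is complete. The order check assumes the oldest rows come first, but some banks print the newest first. All names are hypothetical.

```python
from datetime import date
from decimal import Decimal, InvalidOperation
from typing import Optional

# Hypothetical minimum metadata; extend to match your own schema.
REQUIRED_METADATA = ("account_holder", "start_date", "end_date")


def as_decimal(value) -> Optional[Decimal]:
    """Parse a JSON number or numeric string exactly; None if it is not one."""
    if isinstance(value, bool):  # JSON true/false must not count as 1/0
        return None
    try:
        parsed = Decimal(str(value))
    except InvalidOperation:
        return None
    return parsed if parsed.is_finite() else None  # reject NaN and Infinity


def as_date(value) -> Optional[date]:
    """Parse YYYY-MM-DD; None for missing values or impossible dates."""
    try:
        return date.fromisoformat(value)
    except (TypeError, ValueError):
        return None


def validate_statement(stmt: dict) -> list[str]:
    errors = [f"missing {key}" for key in REQUIRED_METADATA if not stmt.get(key)]
    for key in ("start_date", "end_date"):
        if stmt.get(key) and as_date(stmt[key]) is None:
            errors.append(f"{key} is not a real date")

    opening = as_decimal(stmt.get("opening_balance"))
    closing = as_decimal(stmt.get("closing_balance"))
    if opening is None:
        errors.append("opening_balance is not a number")
    if closing is None:
        errors.append("closing_balance is not a number")

    start, end = as_date(stmt.get("start_date")), as_date(stmt.get("end_date"))
    previous, net, amounts_ok = None, Decimal("0"), True
    for i, row in enumerate(stmt.get("transactions", [])):
        posted = as_date(row.get("posted_at"))
        if posted is None:
            errors.append(f"row {i}: posted_at is not a real date")
        else:
            if start and end and not start <= posted <= end:
                errors.append(f"row {i}: posted_at is outside the statement period")
            if previous is not None and posted < previous:  # assumes oldest first
                errors.append(f"row {i}: rows are out of date order")
            previous = posted

        amount = as_decimal(row.get("amount"))
        if amount is None:
            errors.append(f"row {i}: amount is not a number")
            amounts_ok = False
            continue
        net += amount
        # Signed convention: credits non-negative, debits non-positive.
        direction = row.get("direction")
        sign_ok = (direction == "credit" and amount >= 0) or (
            direction == "debit" and amount <= 0
        )
        if not sign_ok:
            errors.append(f"row {i}: direction does not match amount sign")

    # Extra check: only meaningful when every row on the statement was extracted.
    if opening is not None and closing is not None and amounts_ok:
        if opening + net != closing:
            errors.append(f"balances do not reconcile: {opening} + {net} != {closing}")
    return errors
```

An empty list means the record can move downstream. Anything else is a candidate for the review queue rather than another parsing pass.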

Where LeapOCR Fits

LeapOCR is useful when the workflow needs more than generic conversion:

- markdown for review

- schema-fit JSON for systems

- instructions like “normalize dates to YYYY-MM-DD” or “translate descriptions to English”

- optional bounding boxes when reviewers need geometry on selected rows or balances

It is also useful when bank statements arrive through the same intake path as other documents. Since LeapOCR supports 100+ file formats, teams can keep one ingestion layer across statements, invoices, forms, and mixed back-office files.
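An instruction like "normalize dates to YYYY-MM-DD" can also be replicated or double-checked after extraction. This matters when sources print dates in several layouts. The sketch is minimal. `KNOWN_FORMATS` and `normalize_date` are hypothetical. `%b` matches English month abbreviations only under Python's default C locale. Day-first layouts such as 07/02/2026 conflict with month-first ones, so pick one per bank or region rather than trying both.

```python
from datetime import datetime

# Hypothetical layouts seen across incoming statements. "%b" matches English
# month abbreviations under the default C locale. Month-first and day-first
# slash dates are ambiguous, so this list deliberately includes only one.
KNOWN_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d %b %Y", "%b %d, %Y")


def normalize_date(text: str, formats: tuple[str, ...] = KNOWN_FORMATS) -> str:
    """Return the date as YYYY-MM-DD, or raise ValueError if no layout fits."""
    cleaned = " ".join(text.split())  # collapse stray whitespace from OCR
    for fmt in formats:
        try:
            return datetime.strptime(cleaned, fmt).date().isoformat()
        except ValueError:
            continue  # try the next layout
    raise ValueError(f"unrecognized or impossible date: {text!r}")
```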

Final Take

The best statement-extraction workflow is the one that leaves you with a usable JSON object, not another text parsing project.
